Retail Media Radar – March 2026: Everything you wanted to know about Agentic AI but were afraid to ask

You can’t move these days for talk of Artificial Intelligence and its impact on every area of commerce. AI models have spent the past two years learning how to generate convincing text, images and code.

But the next phase is about something different: enabling those systems to interact with data, tools and services directly, an approach broadly known as Agentic AI.

A growing set of protocols is beginning to define how AI agents retrieve information, trigger workflows and execute tasks across the internet. That shift may sound technical, but it has immediate implications for search, discovery and commerce. If AI systems increasingly act on behalf of users, the question for brands and retailers becomes how those systems find, interpret and prioritise information in the first place.

This month’s Radar looks at the infrastructure emerging underneath that change, and why it may reshape how products, services and media are discovered in the years ahead.

What this means for retail media

From a retail media perspective, the implications centre on discoverability, data access and where influence is applied within the decision process.

If AI agents increasingly retrieve information and execute tasks directly, the way products and offers are surfaced begins to change. Instead of relying solely on visible placements within search results, apps or digital shelves, brands and retailers may need to ensure their data, catalogues and promotional signals are structured in ways that AI systems can retrieve and evaluate programmatically.

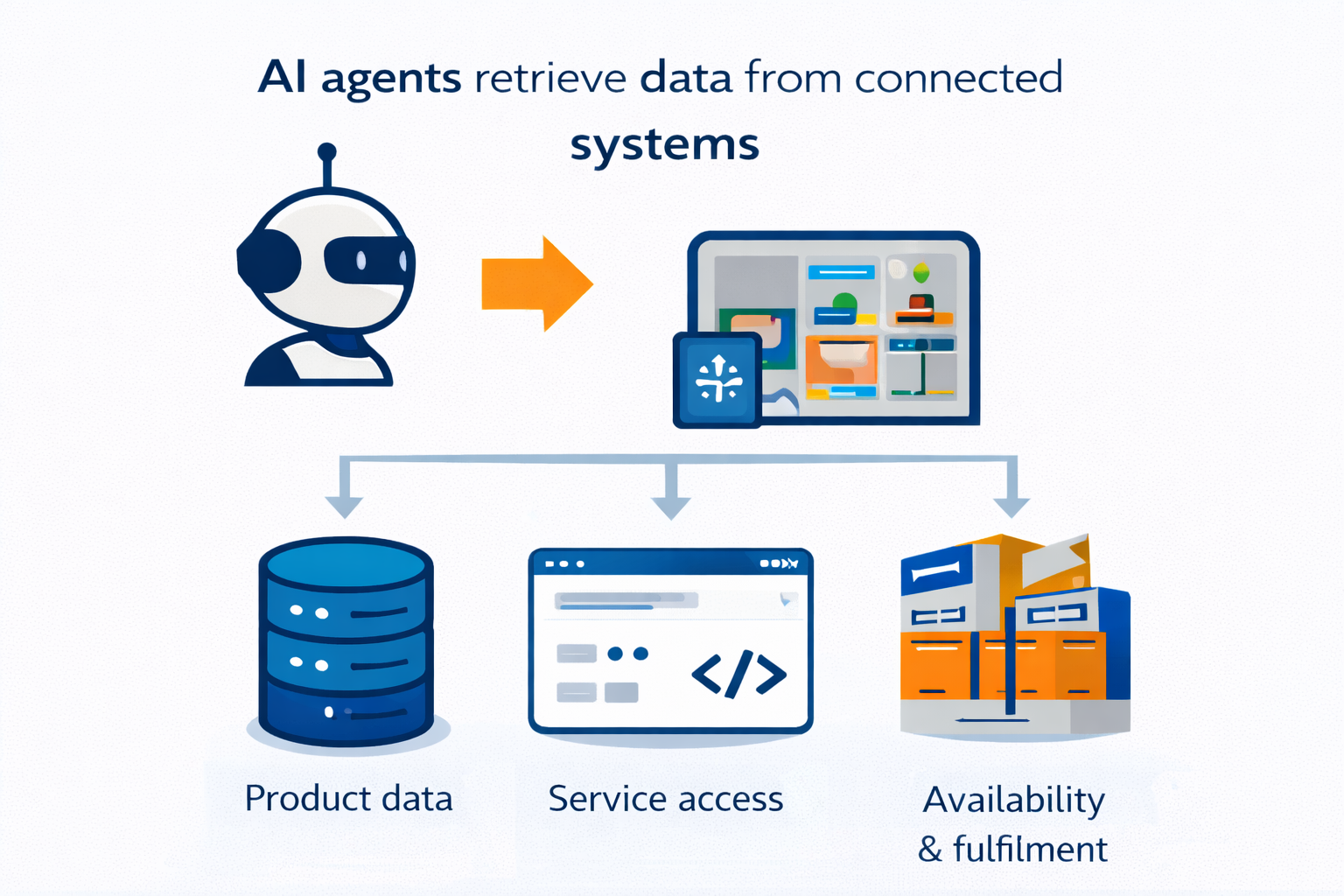

Retailer data therefore becomes even more significant. Retailers already hold enormously rich information - real-time pricing, stock levels, promotion mechanics, loyalty signals, purchase history. Today, that data powers the experience a customer sees on screen. But when AI agents are evaluating options on a shopper's behalf, this same data becomes the raw material agents consume to make decisions. Not banner ads or product imagery - but structured, reliable, up-to-date information about price, availability, attributes and relevance. Retailers who can expose this data cleanly will be better positioned. Those whose data is fragmented or locked in formats designed only for human interfaces may find their products are simply overlooked.
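To make "structured, reliable, up-to-date information" concrete, here is a minimal Python sketch of product records serialised as JSON for an agent to read directly, rather than scraping a page built for human eyes. The field names (`sku`, `price_gbp`, `stock_level`, `promotion` and so on) and the `to_agent_feed` helper are hypothetical, chosen for illustration rather than taken from any retail data standard.

```python
import json
from dataclasses import dataclass, asdict, field
from typing import Optional

# Hypothetical schema: field names are illustrative, not an industry standard.
@dataclass
class ProductOffer:
    sku: str
    name: str
    price_gbp: float
    in_stock: bool
    stock_level: int
    attributes: dict = field(default_factory=dict)  # e.g. dietary or size attributes
    promotion: Optional[str] = None  # e.g. "3 for 2"; None when no promotion is live

def to_agent_feed(offers: list) -> str:
    """Serialise offers as JSON that an agent can parse programmatically."""
    return json.dumps([asdict(o) for o in offers], indent=2)

offers = [
    ProductOffer("SKU-001", "Oat milk 1L", 1.85, True, 42,
                 {"vegan": True, "organic": False}, "3 for 2"),
    ProductOffer("SKU-002", "Almond milk 1L", 2.10, False, 0, {"vegan": True}),
]
print(to_agent_feed(offers))
```

The point of the sketch is that price, availability, attributes and promotion mechanics all sit in named fields an agent can compare across retailers, instead of being implied by layout and imagery.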

Retail media will also need to evolve. The current model is built around human attention - brands paying for sponsored placements, featured positions and banner ads that a shopper sees while browsing.

An AI agent won't notice any of that. Instead, influence may shift to the data layer: how products and offers are structured and prioritised within the information feeds agents rely on. The economics may be similar - brands paying for advantage - but the delivery mechanism would look fundamentally different.

For brands, this raises a pointed question: is my product agent-ready? Discoverability may increasingly depend not just on how products are presented to shoppers, but on how well product data is structured, how rich its attributes are, and how effectively retail systems surface that information to the agents acting on consumers' behalf.

How that shift plays out will depend on how AI systems access and act on information in practice.

Agentic protocols and the new interface layer for commerce

A growing number of technology companies are starting to build services specifically for AI agents rather than human users. The reason is simple: AI systems are beginning to move from answering questions to completing tasks.

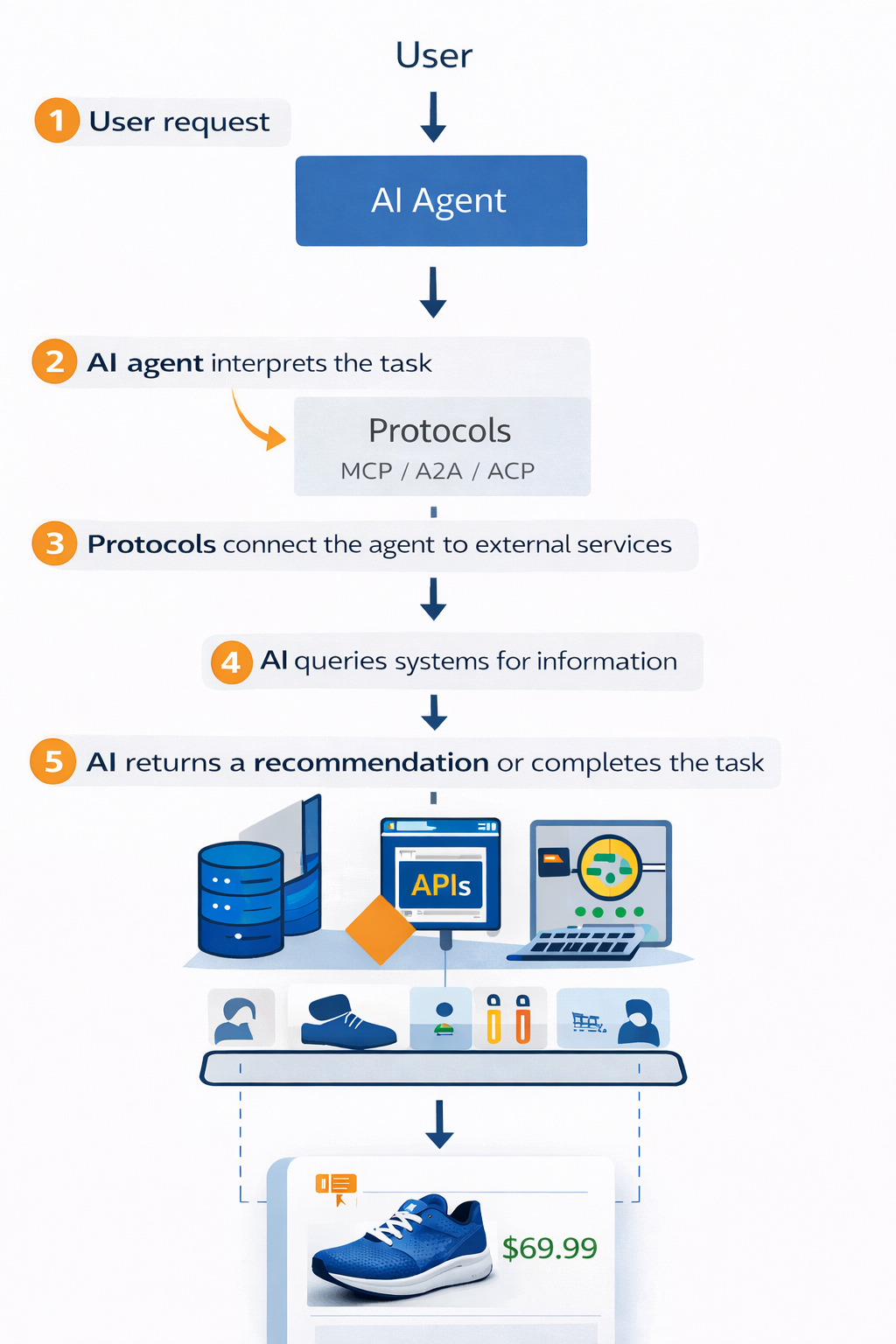

Instead of navigating websites, apps or search results themselves, users can increasingly ask AI agents to complete tasks on their behalf – from researching information to purchasing products. For those systems to work, however, they need a structured way to access data, tools and services across the internet. That is where a new set of protocols is beginning to emerge.

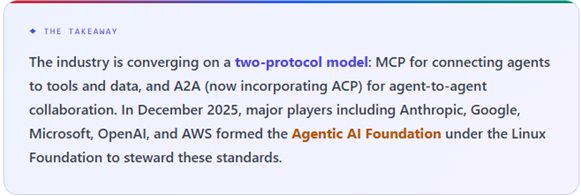

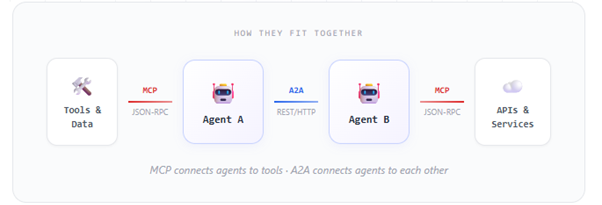

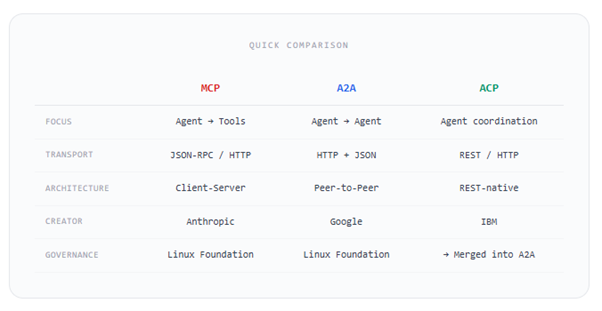

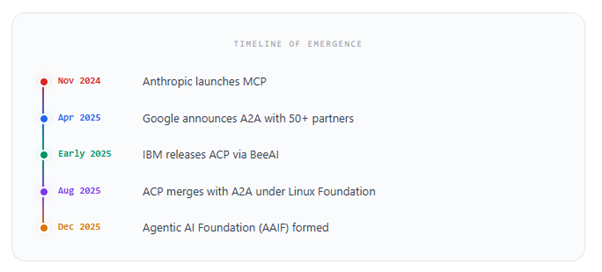

Frameworks such as MCP (Model Context Protocol), A2A (Agent-to-Agent) and ACP (Agent Communication Protocol) are designed to allow AI systems to retrieve information, trigger actions and interact directly with external services. Rather than simply generating responses, AI agents can query databases, access product catalogues, run workflows or execute transactions through these connections.

In effect, these protocols are beginning to define how AI systems interact with the digital world, including how they search for information, evaluate options and, increasingly, transact with commercial services.

APIs have long provided the underlying infrastructure connecting digital services together. They allow applications to exchange data, retrieve information and trigger functionality across different platforms. The protocols now emerging perform a similar role, but for AI systems rather than traditional applications. While the terminology varies, most fall into three broad categories:

1. Agent-to-tool protocols

For AI to be truly useful in a business context, it needs to do more than just answer questions - it needs to be able to reach into your existing systems, pull out data, and take action. A new wave of open standards is emerging to make this possible, and three are worth knowing about:

MCP (Model Context Protocol) - Developed by Anthropic (the company behind Claude), this is currently the most talked-about approach. In simple terms, MCP provides a common "language" that lets an AI assistant discover what tools and data sources are available to it, and then use them. Rather than building a bespoke connection for every system, MCP acts as a universal plug - one standard way for AI to talk to databases, software and services. It's gaining rapid adoption and is likely to become a foundational part of how AI-powered tools interact with business systems.
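Under the hood, MCP messages use JSON-RPC 2.0: a server advertises its tools through a `tools/list` method and runs them through `tools/call`. The sketch below, in plain Python with no SDK, shows roughly what those exchanges could look like for a retailer. The `search_products` tool, the catalogue and the `handle` function are hypothetical, and the transport (how messages actually travel between agent and server) is left out; in practice the official MCP SDKs take care of that plumbing.

```python
import json

# Hypothetical catalogue; a real server would query a live product system.
CATALOGUE = {
    "SKU-001": {"name": "Oat milk 1L", "price_gbp": 1.85},
    "SKU-002": {"name": "Almond milk 1L", "price_gbp": 2.10},
}

# Tool definition in the shape returned by tools/list: a name, a description,
# and a JSON Schema describing the inputs the tool accepts.
TOOLS = [{
    "name": "search_products",  # hypothetical tool name
    "description": "Search the product catalogue by keyword.",
    "inputSchema": {
        "type": "object",
        "properties": {"query": {"type": "string"}},
        "required": ["query"],
    },
}]

def search_products(query: str) -> list:
    """Case-insensitive keyword match against product names."""
    q = query.lower()
    return [{"sku": sku, **item} for sku, item in CATALOGUE.items()
            if q in item["name"].lower()]

def error(req_id, code: int, message: str) -> str:
    """Build a JSON-RPC 2.0 error response."""
    return json.dumps({"jsonrpc": "2.0", "id": req_id,
                       "error": {"code": code, "message": message}})

def handle(request_json: str) -> str:
    """Answer one JSON-RPC 2.0 request for the tools/list and tools/call methods."""
    req = json.loads(request_json)
    req_id, method = req.get("id"), req.get("method")
    if method == "tools/list":
        result = {"tools": TOOLS}
    elif method == "tools/call":
        params = req.get("params", {})
        if params.get("name") != "search_products":
            return error(req_id, -32602, "Unknown tool")  # JSON-RPC "invalid params"
        hits = search_products(params.get("arguments", {}).get("query", ""))
        # Tool results come back as a list of content items; here, one text item.
        result = {"content": [{"type": "text", "text": json.dumps(hits)}],
                  "isError": False}
    else:
        return error(req_id, -32601, "Method not found")
    return json.dumps({"jsonrpc": "2.0", "id": req_id, "result": result})

print(handle('{"jsonrpc": "2.0", "id": 1, "method": "tools/list"}'))
print(handle(json.dumps({
    "jsonrpc": "2.0", "id": 2, "method": "tools/call",
    "params": {"name": "search_products", "arguments": {"query": "oat"}},
})))
```

The "universal plug" idea shows up in the first call: the agent learns what the server can do, and what inputs each tool expects, before it uses anything.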

UTCP (Universal Tool Calling Protocol) - This takes a slightly different approach. Instead of routing everything through a central connector, UTCP lets AI agents talk directly to existing software interfaces using a standardised description of how each tool works. The goal is simplicity: fewer moving parts, with AI systems calling on tools in a more direct way.
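The UTCP idea, a tool description that tells the agent how to call an existing interface directly, can be sketched as follows. This assumes a simple HTTP endpoint; the descriptor fields (`call`, `query_params`), the `check_stock` tool and the example.com URL are hypothetical and do not reproduce the actual UTCP schema. Nothing is sent over the network: the code only builds the request an agent would make.

```python
from urllib.parse import urlencode

# Hypothetical tool description in the spirit of UTCP: it describes how to call an
# existing HTTP endpoint directly. Field names are illustrative, not the UTCP schema.
STOCK_TOOL = {
    "name": "check_stock",
    "description": "Check stock for a SKU at a given store.",
    "call": {
        "method": "GET",
        "url": "https://api.example.com/stock",  # placeholder endpoint
        "query_params": ["sku", "store_id"],
    },
}

def build_request(tool: dict, arguments: dict) -> tuple:
    """Turn a tool description plus arguments into (method, url) for a direct call."""
    call = tool["call"]
    missing = [p for p in call["query_params"] if p not in arguments]
    if missing:
        raise ValueError(f"missing arguments: {missing}")
    params = {p: arguments[p] for p in call["query_params"]}
    return call["method"], f'{call["url"]}?{urlencode(params)}'

method, url = build_request(STOCK_TOOL, {"sku": "SKU-001", "store_id": "0042"})
print(method, url)  # GET https://api.example.com/stock?sku=SKU-001&store_id=0042
```

The design choice is that the retailer's existing API stays as it is; only a description of it is published, and the agent does the translation.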

TAP (Tool Abstraction Protocol) - Built by LangChain (a widely used AI development framework), TAP is focused on consistency. It aims to ensure that no matter which AI platform or framework you're using, tools are described and accessed in the same way - reducing the risk of fragmentation as the market evolves.

These protocols are still maturing, but they represent the ‘plumbing’ that will determine how easily AI agents can be embedded into real business workflows. Getting familiar with them now will help you make better decisions as the technology landscape takes shape.

2. Agent-to-agent communication protocols

As AI systems become more capable, we're moving beyond a single assistant doing a single job. Increasingly, the vision is for multiple specialised AI agents to work together - one might analyse sales data, another might optimise pricing, and a third might generate a recommendation for a buyer. For that to work, these agents need a reliable way to communicate and coordinate with each other.

Two protocols are leading this conversation:

A2A (Agent-to-Agent Protocol) - Introduced by Google, A2A provides a standard way for AI agents to discover each other, exchange information, and collaborate on tasks. The idea is straightforward: if you have several AI systems built by different providers or for different purposes, A2A gives them a shared way to work together rather than operating in isolation. Google's backing gives this significant momentum, and it's designed to complement the tool-connection protocols (like MCP) rather than replace them.

ACP (Agent Communication Protocol) - Developed through the BeeAI project and supported by the Linux Foundation, ACP is tackling a similar challenge but with a strong emphasis on openness. Its goal is to ensure that agents built using different platforms and frameworks can still interoperate, preventing a future where your AI tools can only collaborate if they happen to come from the same vendor.
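The idea A2A and ACP share, agents handing tasks and results to each other through a common message structure, can be sketched in a few lines of Python. This is a simplified, in-process illustration under stated assumptions: the envelope fields, the `pricing` and `copy` agents and the 10% discount rule are all hypothetical, and neither protocol's real message format, discovery mechanism or transport is reproduced here.

```python
import uuid
from typing import Callable

# Hypothetical shared message envelope. A2A and ACP each define their own formats;
# this only illustrates that every agent reads and writes the same structure.
def make_task(sender: str, recipient: str, skill: str, payload: dict) -> dict:
    return {"id": str(uuid.uuid4()), "from": sender, "to": recipient,
            "skill": skill, "payload": payload}

# Two hypothetical specialised agents.
def pricing_agent(task: dict) -> dict:
    # Toy rule for illustration: suggest a 10% discount when stock is high.
    price, stock = task["payload"]["price"], task["payload"]["stock"]
    return {"suggested_price": round(price * 0.9, 2) if stock > 100 else price}

def copy_agent(task: dict) -> dict:
    p = task["payload"]
    return {"text": f'{p["name"]} now £{p["price"]:.2f}'}

AGENTS: dict = {"pricing": pricing_agent, "copy": copy_agent}

def send(task: dict) -> dict:
    """Deliver a task to the named agent and wrap its reply in the same envelope."""
    handler: Callable[[dict], dict] = AGENTS[task["to"]]
    return {"id": task["id"], "from": task["to"], "to": task["from"],
            "result": handler(task)}

# A coordinator chains the two agents: pricing first, then promotional copy.
reply = send(make_task("coordinator", "pricing", "suggest_price",
                       {"price": 2.00, "stock": 250}))
new_price = reply["result"]["suggested_price"]
ad_copy = send(make_task("coordinator", "copy", "write_copy",
                         {"name": "Oat milk 1L", "price": new_price}))
print(ad_copy["result"]["text"])  # Oat milk 1L now £1.80
```

Because both agents accept and return the same envelope, the coordinator does not need to know how either one works internally, which is the interoperability these protocols aim to standardise across vendors.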

Multi-agent systems are still early-stage for most businesses, but the direction of travel is clear. The retailers and brands that benefit most from AI will likely be those running coordinated networks of specialised agents - not just one general-purpose tool. These protocols will shape how easily that future comes together, and whether it remains open or becomes locked into specific technology ecosystems.

3. Supporting infrastructure and specifications

Beyond the headline protocols, a supporting layer of standards is emerging to handle some of the practical challenges of making AI agents work reliably in real-world settings. Two are worth flagging:

Agent.json - Think of this as an AI agent's business card. It's a proposed standard for how an agent describes itself: what it can do, what tools it can access, and how other systems should interact with it. Just as a website has a structured sitemap that search engines can read, Agent.json would give any AI agent a machine-readable profile that other agents and systems can instantly understand. It's a small but important building block: if agents can't clearly describe their own capabilities, coordinating them becomes much harder.
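A machine-readable profile is what lets one system find the right agent for a job. The sketch below assumes a simple JSON profile per agent and filters by declared capability; the field names (`capabilities`, `endpoint`) and the agents themselves are hypothetical, and the proposed Agent.json specification may define its fields differently.

```python
import json

# Hypothetical agent profiles in the spirit of Agent.json. Field names are
# illustrative; the proposed specification may define different ones.
PROFILES_JSON = """
[
  {"name": "basket-builder", "description": "Assembles grocery baskets",
   "capabilities": ["search_products", "build_basket"],
   "endpoint": "https://agents.example.com/basket"},
  {"name": "price-watcher", "description": "Tracks price changes",
   "capabilities": ["track_price"],
   "endpoint": "https://agents.example.com/prices"}
]
"""

def find_agents(profiles: list, capability: str) -> list:
    """Return the names of agents whose profile declares the capability."""
    return [p["name"] for p in profiles if capability in p.get("capabilities", [])]

profiles = json.loads(PROFILES_JSON)
print(find_agents(profiles, "build_basket"))  # ['basket-builder']
print(find_agents(profiles, "refund"))        # []
```

An agent that declares nothing simply never matches, which is the practical cost of not describing capabilities clearly.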

SLIM (Secure Low-Latency Interactive Messaging) - This tackles a different challenge: speed and reliability. When AI agents are working together or interacting with live business systems, they need to exchange information quickly and securely. SLIM is a transport framework designed specifically for this - ensuring that messages between agents and the systems they connect to are delivered in real time without compromising on security. As agents move from answering questions to taking actions (placing orders, adjusting prices, triggering alerts), this kind of responsive, secure communication layer becomes essential.

These supporting standards may sound like technical detail, but they address two fundamental questions any business will eventually face: how do we know what our AI agents can actually do, and can they operate fast and securely enough for real-time decision-making? Getting these foundations right is what separates a useful AI deployment from a fragile one.

A useful way to understand the potential consumer impact for retail is to look at how customers find and buy products today.

A typical journey involves searching for a product, scrolling through results, comparing prices, reading reviews, noticing promotions, and eventually making a purchase. Even a routine weekly shop involves dozens of small decisions - swapping brands, responding to offers, spotting something new. Every step happens on a screen designed for human browsing: search engines, retailer websites and apps.

This matters because each of those moments is a commercial touchpoint. Search rankings, sponsored placements, on-site promotions, loyalty offers, shelf positioning - the entire retail media and trade spend ecosystem is built around the assumption that a human is looking, choosing, and being influenced along the way. The current model runs on human attention, curiosity and impulse.

Agent-driven systems introduce a different model. Instead of navigating interfaces themselves, users may increasingly delegate tasks to AI systems that retrieve information, compare options and execute actions on their behalf.

Developers are already experimenting with this approach in commerce environments. For example, teams working on grocery-focused MCP servers are building services that allow AI agents to search product catalogues, assemble shopping baskets and execute purchases through structured queries rather than traditional browsing.
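The grocery example can be sketched as a loop of structured queries: for each item on a shopping list, filter the catalogue by category and availability, choose an option, and report anything that cannot be filled. The catalogue, the cheapest-in-stock selection rule and the function names are hypothetical; a real grocery MCP server would expose search and basket operations as tools and apply its own ranking, and the purchase step is omitted here.

```python
from dataclasses import dataclass

@dataclass
class Product:
    sku: str
    name: str
    category: str
    price: float
    in_stock: bool

# Hypothetical catalogue; a grocery MCP server might expose something similar via tools.
CATALOGUE = [
    Product("A1", "Semi-skimmed milk 2L", "milk", 1.50, True),
    Product("A2", "Organic milk 2L", "milk", 2.10, True),
    Product("B1", "Wholemeal bread", "bread", 1.20, False),
    Product("B2", "Sourdough loaf", "bread", 2.50, True),
]

def search(category: str) -> list:
    """Structured query: filter by category and availability instead of browsing pages."""
    return [p for p in CATALOGUE if p.category == category and p.in_stock]

def build_basket(shopping_list: list) -> tuple:
    """Pick the cheapest in-stock match for each item; report items that can't be filled."""
    basket, unfilled = [], []
    for item in shopping_list:
        options = search(item)
        if options:
            basket.append(min(options, key=lambda p: p.price))
        else:
            unfilled.append(item)
    return basket, unfilled

basket, unfilled = build_basket(["milk", "bread", "eggs"])
total = round(sum(p.price for p in basket), 2)
print([p.sku for p in basket], total, unfilled)  # ['A1', 'B2'] 4.0 ['eggs']
```

Note what never enters the decision: a banner, a featured slot or a shelf position. Only the structured fields (category, stock status, price) shape which product lands in the basket, which is the shift the rest of this section describes.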

This is why some technologists are beginning to describe these protocols as the foundations of a new form of ‘search optimisation’. In an agent-mediated environment, discovery becomes dependent on whether systems can access, interpret and act on structured information.

In practical terms, services must become legible to machines as well as to people. For retailers and brands, this introduces a new layer of discoverability. Product information, pricing, availability and promotional signals need to be structured in ways that AI systems can retrieve and evaluate programmatically. The ability for an agent to query a service directly may become just as important as how prominently a product appears on a webpage. AI systems will also need access to loyalty data if they are to personalise an automated shop for a particular customer.

This does not mean human-driven interfaces disappear. Consumer behaviour rarely shifts overnight, and websites and apps will remain central for many years. But the infrastructure now being built suggests a parallel layer where AI systems can interact directly with services and data.

In that environment, influence can begin earlier in the process. Instead of competing solely for attention in visible interfaces, organisations may need to ensure that the systems representing their products and services are accessible, interpretable and prioritised within the decision processes of AI agents.

The protocols enabling this shift are still evolving, and their eventual standards are far from settled. However, the direction of travel is becoming clearer. The internet was originally built around human interfaces, while the emerging layer of AI protocols is designed for machine-to-machine interaction.

As these systems mature, the competitive question may shift. Visibility to consumers will still matter, but organisations may increasingly need to ensure their products, services and data can be discovered and understood by the systems acting on a consumer’s behalf.
